Introduction

School assessment has long created a two-part burden: teachers spend hours designing tests and grading student work, while students wait days—sometimes weeks—for feedback to arrive. By the time results reach them, the learning moment has already passed, and misconceptions quietly take root.

AI is beginning to close this feedback loop in classrooms across India and globally, delivering near-instant evaluation and enabling timely intervention before students fall further behind.

The shift is accelerating rapidly. The global AI in K-12 education market was valued at USD 390.8 million in 2024 and is projected to reach USD 7,949.9 million by 2033, a compound annual growth rate (CAGR) of 38.1%. In India specifically, the market stood at USD 12.6 million in 2024, with projections reaching USD 280.9 million by 2033, a 39.5% CAGR that outpaces even the global average. Schools are already adopting these tools, and early movers are reporting measurable gains in student outcomes.

Many school leaders are curious but unsure how these tools actually work. What happens between the moment a student submits an answer and the moment they receive feedback? This guide breaks down the mechanics in plain terms: how the AI reads a student's response, evaluates it, and surfaces the kind of insight a teacher can actually act on.

TLDR

- AI-based assessment tools use machine learning and natural language processing to evaluate student responses, identify learning gaps, and deliver instant feedback—without waiting for manual grading

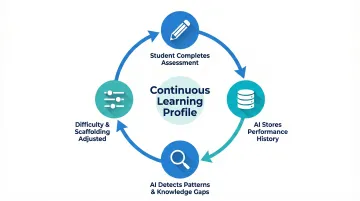

- The process flows from assessment initiation → AI evaluation of student input → adaptive difficulty calibration → actionable outputs for students, teachers, and parents

- Unlike static tests, AI assessments adapt in real time—adjusting question difficulty or flagging weak areas based on live student performance

- The deeper value is the data trail: teachers can see exactly which students are struggling with which concepts and deliver personalised instruction accordingly

What Is an AI-Based Assessment Tool for Schools?

An AI-based assessment tool is software that uses artificial intelligence — natural language processing, adaptive algorithms, and predictive analytics — to create, administer, evaluate, and report on student assessments. It automates the mechanical layer of evaluation while producing detailed performance insights that guide instructional decisions.

The Problem It Was Built to Solve

Traditional assessments create a time lag between when a student answers a question and when they receive corrective feedback. This delay weakens learning retention. John Hattie's synthesis of meta-analyses puts the effect size of feedback at d = 0.70, well above the 0.40 threshold he treats as meaningful impact, yet in conventional testing that feedback often arrives days later.

AI tools close this gap by processing responses instantly, while the concept is still active in the student's mind.

What It Is Not

An AI assessment tool is not a replacement for teacher judgment. It handles the repetitive layer of evaluation — pattern recognition, scoring, gap detection — while leaving the relational and interpretive role firmly with the teacher. The AI identifies where a student broke down; the teacher decides how to reteach and support that student's learning path.

Types of Assessments Supported

AI tools typically support three assessment categories:

- Formative assessments: Ongoing checks for understanding during instruction

- Summative assessments: End-of-unit evaluations measuring cumulative learning

- Diagnostic assessments: Baseline tests to identify where a student starts

The underlying AI process differs slightly across these types. Adaptive difficulty adjustment, for instance, is more common in formative and diagnostic modes, while summative assessments focus more on comprehensive scoring and reporting against learning objectives.

How Does an AI-Based Assessment Tool Work?

An AI-based assessment tool doesn't operate as a single action. It runs through a defined sequence of stages, each contributing to the accuracy, relevance, and usefulness of the final output for teachers, students, and parents.

Initiation: How the Assessment Begins

An assessment session is triggered when a teacher assigns a quiz or sets a learning checkpoint, or when a student reaches the end of a module in a digital learning path. Initiation can be:

- Manual: Teacher-assigned quiz or test

- Automated: Triggered by progress milestones within a learning management system

- Student-initiated: Practice mode or self-assessment

At this stage, the system collects critical context: student identity, grade level, subject, prior performance history, and the specific learning objectives the assessment is designed to test. This context determines everything that follows, from the difficulty of the first question to how feedback is framed.
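As a rough sketch of the context described above, the record a tool assembles at initiation might look like the following. The class name, field names, the 1 to 5 difficulty scale, and the rule for picking a starting difficulty are all illustrative assumptions, not any specific platform's schema.

```python
from dataclasses import dataclass, field
from enum import Enum


class Trigger(Enum):
    MANUAL = "manual"          # teacher-assigned quiz or test
    AUTOMATED = "automated"    # progress milestone in the LMS
    SELF_INITIATED = "self"    # practice mode or self-assessment


@dataclass
class AssessmentContext:
    """Hypothetical record of what the system knows when a session starts."""
    student_id: str
    grade_level: int
    subject: str
    trigger: Trigger
    learning_objectives: list[str]
    # Prior scores (0.0 to 1.0) per objective; empty for a new student.
    prior_scores: dict[str, float] = field(default_factory=dict)

    def starting_difficulty(self) -> int:
        """Pick a first-question difficulty from 1 (easy) to 5 (hard).

        Assumed rule: average the prior scores on the tested objectives and
        scale to 1-5; with no history, start in the middle.
        """
        known = [self.prior_scores[o] for o in self.learning_objectives
                 if o in self.prior_scores]
        if not known:
            return 3
        average = sum(known) / len(known)
        return max(1, min(5, 1 + round(average * 4)))


ctx = AssessmentContext(
    student_id="S-001",
    grade_level=7,
    subject="Mathematics",
    trigger=Trigger.MANUAL,
    learning_objectives=["fractions", "ratios"],
    prior_scores={"fractions": 0.5, "ratios": 1.0},
)
print(ctx.starting_difficulty())  # prints 4
```

A student with no recorded history would start at difficulty 3 under this rule, which is one simple way the context can shape the first question.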

Core Processing: How the AI Reads and Evaluates Responses

Once a student submits a response, the core engine uses natural language processing (NLP) to interpret written answers. Rather than checking for a matching keyword, it analyses meaning, reasoning structure, and conceptual accuracy. Multiple-choice and numeric responses are simpler to evaluate: the system checks them against an answer key, typically allowing a small tolerance for numeric values.
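For closed-form answers, one simple approach is a direct comparison against an answer key. The sketch below shows that idea only; the function names and the default tolerance are assumptions, and it does not attempt the NLP step used for written answers.

```python
import math


def score_multiple_choice(selected: str, correct: str) -> bool:
    """Compare the chosen option with the answer key, ignoring case and spaces."""
    return selected.strip().lower() == correct.strip().lower()


def score_numeric(submitted: str, correct: float, rel_tol: float = 1e-3) -> bool:
    """Accept a numeric answer within a relative tolerance of the key.

    Non-numeric input is scored as incorrect rather than raising an error.
    """
    try:
        value = float(submitted)
    except ValueError:
        return False
    return math.isclose(value, correct, rel_tol=rel_tol)


print(score_multiple_choice(" b ", "B"))  # prints True
print(score_numeric("3.1416", 3.14159))   # prints True
print(score_numeric("three", 3.0))        # prints False
```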

NLP-based educational assessment has matured significantly. Tools like ETS's e-rater engine analyse text at semantic and discourse levels, evaluating:

- Grammar and sentence coherence

- Logical structure and argumentation flow

- Conceptual depth, not just surface-level keyword presence

This allows the AI to assess not just whether a student got an answer right, but also how well they reasoned their way to it.

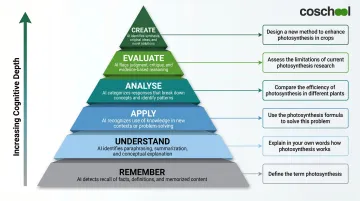

Many AI assessment tools align responses to a cognitive framework such as the revised Bloom's Taxonomy, which categorises thinking into six levels: Remember, Understand, Apply, Analyse, Evaluate, and Create. The AI estimates the depth of understanding at which a student is operating. A student who recalls a definition sits at a different cognitive level than one who applies that concept to a novel problem.
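One way to picture this alignment is to treat the six levels as an ordered scale and report the highest level at which a student answered correctly. This is a minimal sketch that assumes each question has already been tagged with a level by its author; the function name is hypothetical, and real tools may infer the level from the response itself.

```python
from enum import IntEnum
from typing import Optional


class BloomLevel(IntEnum):
    REMEMBER = 1
    UNDERSTAND = 2
    APPLY = 3
    ANALYSE = 4
    EVALUATE = 5
    CREATE = 6


def highest_level_demonstrated(
    results: list[tuple[BloomLevel, bool]],
) -> Optional[BloomLevel]:
    """Return the highest Bloom level at which the student answered correctly.

    `results` pairs the level each question was tagged with and whether the
    student's answer was judged correct. Returns None if nothing was correct.
    """
    passed = [level for level, correct in results if correct]
    return max(passed) if passed else None


results = [
    (BloomLevel.REMEMBER, True),
    (BloomLevel.UNDERSTAND, True),
    (BloomLevel.APPLY, True),
    (BloomLevel.ANALYSE, False),
]
print(highest_level_demonstrated(results).name)  # prints APPLY
```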

This stage also involves rubric matching: the AI evaluates written responses against pre-loaded criteria, flagging where a student's answer meets, partially meets, or misses the benchmark. This enables specific, targeted feedback — "Your explanation missed the cause-and-effect relationship" — rather than a generic "incorrect" label.
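Rubric matching can be sketched as mapping a per-criterion score to one of the three bands and attaching the matching feedback line. The example assumes some upstream model has already produced a 0 to 1 score for each criterion; the class, function names, and thresholds are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    name: str
    feedback_if_missed: str


def band(score: float, meets: float = 0.8, partial: float = 0.4) -> str:
    """Map a 0-1 criterion score to a rubric band (thresholds are assumed)."""
    if score >= meets:
        return "meets"
    if score >= partial:
        return "partially meets"
    return "misses"


def rubric_feedback(criteria: list[Criterion], scores: dict[str, float]) -> list[str]:
    """Build one feedback line per criterion that is not fully met."""
    lines = []
    for criterion in criteria:
        # A criterion with no score is treated as missed.
        result = band(scores.get(criterion.name, 0.0))
        if result != "meets":
            lines.append(f"{criterion.name} ({result}): {criterion.feedback_if_missed}")
    return lines


criteria = [
    Criterion("Cause and effect",
              "Your explanation missed the cause-and-effect relationship."),
    Criterion("Use of evidence",
              "Support your claim with an example from the text."),
]
scores = {"Cause and effect": 0.3, "Use of evidence": 0.9}
for line in rubric_feedback(criteria, scores):
    print(line)
# prints: Cause and effect (misses): Your explanation missed the cause-and-effect relationship.
```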

Adaptive Calibration: How the AI Adjusts in Real Time

As the student progresses through an assessment, the AI continuously re-evaluates their performance pattern. If a student answers several questions correctly in a row, difficulty increases. If errors cluster around a specific concept, the tool flags it as a knowledge gap and may introduce reinforcing questions to probe deeper.

This calibration prevents two common failures of traditional assessment: under-challenging strong students and overwhelming struggling ones. In conventional settings, neither problem surfaces until exam results return weeks later. Research on adaptive digital learning systems reports a significant positive effect on academic performance (Hedges' g = 0.606, p < 0.001) compared with traditional classroom learning, which is consistent with the value of real-time difficulty adjustment.
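The streak and error-cluster behaviour described in this section can be sketched with a small state machine. Everything here is an assumption made for illustration: the 1 to 5 difficulty scale, the streak length of three, the rule that a wrong answer lowers difficulty by one step, and the two-error threshold for flagging a gap. Production systems typically use more sophisticated statistical models.

```python
from collections import Counter


class AdaptiveSession:
    """Toy calibrator: difficulty runs from 1 (easy) to 5 (hard)."""

    def __init__(self, start_difficulty: int = 3, streak_to_raise: int = 3,
                 errors_to_flag: int = 2) -> None:
        self.difficulty = start_difficulty
        self.streak_to_raise = streak_to_raise
        self.errors_to_flag = errors_to_flag
        self.correct_streak = 0
        self.errors_by_concept = Counter()

    def record(self, concept: str, correct: bool) -> None:
        """Update difficulty and error counts after one answer."""
        if correct:
            self.correct_streak += 1
            if self.correct_streak >= self.streak_to_raise:
                # Several correct answers in a row: step difficulty up.
                self.difficulty = min(5, self.difficulty + 1)
                self.correct_streak = 0
        else:
            # Assumed rule: any error resets the streak and eases difficulty.
            self.correct_streak = 0
            self.errors_by_concept[concept] += 1
            self.difficulty = max(1, self.difficulty - 1)

    def knowledge_gaps(self) -> list[str]:
        """Concepts where errors have clustered past the flag threshold."""
        return sorted(c for c, n in self.errors_by_concept.items()
                      if n >= self.errors_to_flag)


session = AdaptiveSession()
answers = [("fractions", True), ("fractions", True), ("fractions", True),
           ("ratios", False), ("ratios", False)]
for concept, correct in answers:
    session.record(concept, correct)

print(session.difficulty)        # prints 2
print(session.knowledge_gaps())  # prints ['ratios']
```

In this run the difficulty rises to 4 after three correct answers, then falls to 2 after two errors on ratios, which is also the point at which ratios is flagged as a gap.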

Output and Reporting: What Gets Delivered and to Whom

At the end of an assessment session, the tool produces multiple outputs:

- For students: Instant, detailed feedback — what they got right, what they missed, and why

- For teachers: A performance summary showing class-wide and individual results, including which concepts need reteaching

- For parents (in integrated platforms): A report or alert about their child's progress or areas of concern

This output integrates into the broader teaching workflow. Teachers use the class-wide data to identify which concepts need reteaching, group students for targeted support, or adjust lesson pacing. Assessment data becomes the starting point for the next teaching decision, not just a record of past performance.
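The teacher-facing summary can be illustrated with a small aggregation over per-student concept scores. This is a sketch under stated assumptions: scores are on a 0 to 1 scale, a concept is recommended for whole-class reteaching when at least half the class falls below a mastery threshold, and the function name and output keys are hypothetical.

```python
from collections import defaultdict


def class_report(results: dict[str, dict[str, float]],
                 mastery_threshold: float = 0.6,
                 reteach_share: float = 0.5) -> dict:
    """Summarise per-student concept scores into teacher-facing outputs.

    `results` maps student name -> {concept: score between 0 and 1}.
    """
    struggling = defaultdict(list)  # concept -> students below the threshold
    for student, scores in results.items():
        for concept, score in scores.items():
            if score < mastery_threshold:
                struggling[concept].append(student)

    class_size = len(results)
    reteach = sorted(c for c, names in struggling.items()
                     if class_size and len(names) / class_size >= reteach_share)
    return {
        # Concepts that enough of the class missed to justify reteaching.
        "reteach_whole_class": reteach,
        # Remaining gaps, grouped so the teacher can plan targeted support.
        "support_groups": {c: sorted(names) for c, names in struggling.items()
                           if c not in reteach},
    }


results = {
    "Asha": {"fractions": 0.4, "ratios": 0.9},
    "Ravi": {"fractions": 0.5, "ratios": 0.5},
    "Meera": {"fractions": 0.8, "ratios": 0.7},
}
report = class_report(results)
print(report["reteach_whole_class"])  # prints ['fractions']
print(report["support_groups"])       # prints {'ratios': ['Ravi']}
```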

Platforms like Coschool are designed around this principle, using Generative AI to create a closed-loop learning ecosystem. The workflow operates as follows: teachers assign homework, the AI tutor (Vin) guides students during homework and self-study, Vin shares actionable insights on student performance with teachers, and teachers implement student-specific interventions. This continuous cycle transforms assessment from a measurement event into a teaching tool.

What Makes AI Assessment Different from Traditional Testing

Feedback Timing: The Learning Moment Advantage

In a traditional paper-based or manually graded test, feedback arrives days later, after the student has mentally moved on. AI assessment delivers feedback in the moment, while the concept is still active in the student's mind. This timing difference has measurable consequences: one study found that students reported significantly lower motivation when feedback took longer than 10 days, which suggests a practical upper limit on acceptable delay.

Timely, specific feedback enables students to correct misconceptions before they consolidate into long-term memory. Delayed feedback allows errors to become embedded—requiring far more effort to unlearn later.

From Measurement to Diagnosis: Personalised Learning at Scale

Traditional assessments measure what a student got right or wrong. AI assessments reveal where in the cognitive process the student broke down—and flag patterns across an entire class. This transforms assessment from a tool for measurement into a tool for teaching.

A teacher grading 35 essays manually might notice that several students struggled with thesis statements—but by the time the remaining papers are graded and a reteaching session planned, a week has passed. An AI assessment tool identifies the same pattern within minutes of the last submission, enabling same-day intervention.

The Teacher's Changed Role: From Scorer to Strategist

The grading burden on teachers is severe and well documented, and it is not confined to one country. Research from the U.S. illustrates the scale:

- U.S. teachers spend an average of 9.9 hours per week grading—more than a full workday—and 32% have considered leaving the profession due to grading workload

- 84% of K-12 teachers say they lack enough time during regular work hours for grading, lesson planning, and paperwork

AI assessment tools directly target this time deficit. Instead of spending hours scoring, teachers receive a ready-made action plan.

This shift is particularly significant in Indian classrooms, where teacher-to-student ratios make personalised attention structurally difficult. India's UDISE+ 2022-23 report shows a pupil-teacher ratio of 23:1 at the secondary level, with states like Bihar and Uttar Pradesh exceeding national averages.

With 248 million students enrolled nationally, even moderate ratios add up to an enormous volume of student work to evaluate. Individualised assessment feedback is impractical at that scale without technological support.

Where AI Assessment Tools Are Used in Schools

AI assessment tools fit naturally into multiple points in the school day and academic calendar:

During lesson check-ins: Formative micro-assessments after a concept is taught allow teachers to gauge understanding before moving forward. These quick checks generate immediate data about which students grasped the concept and which need additional support.

At the end of a unit: Summative evaluations measure cumulative learning and provide a comprehensive view of student mastery across multiple objectives.

At the start of a term: Diagnostic baseline testing identifies where each student starts, enabling teachers to differentiate instruction from day one.

As homework or practice assignments: Self-directed assessments that generate performance data, allowing students to practice independently while teachers monitor progress remotely.

Optimal Environments for AI Assessment

AI assessment tools perform best in:

- Digital-first or blended classrooms where students interact with content on a device

- Schools with a central LMS (Learning Management System) or integrated learning platform

- Schools running personalised or adaptive learning programs

The tool's effectiveness increases with access to longitudinal student data. The more history it has, the sharper its gap detection and difficulty calibration. A system that knows a student struggled with fractions six months ago can adjust its response when that student encounters a related concept in geometry — providing scaffolding that a one-time snapshot assessment simply cannot.
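The fractions-to-geometry example can be sketched as a lookup against a prerequisite map combined with the student's score history. The map contents, the function name, and the 0.6 threshold are hypothetical; how a real platform models concept dependencies is not described here.

```python
# Hypothetical prerequisite map: concept -> earlier concepts it builds on.
PREREQUISITES = {
    "area of a triangle": ["fractions", "multiplication"],
    "similar triangles": ["ratios", "fractions"],
}


def needs_scaffolding(concept: str, history: dict[str, list[float]],
                      threshold: float = 0.6) -> list[str]:
    """Return prerequisites of `concept` where the student's average past
    score (0-1) fell below the threshold.

    Prerequisites with no history are skipped, since there is nothing
    to base a judgment on.
    """
    weak = []
    for prereq in PREREQUISITES.get(concept, []):
        past = history.get(prereq)
        if past and sum(past) / len(past) < threshold:
            weak.append(prereq)
    return weak


history = {"fractions": [0.3, 0.5, 0.55], "multiplication": [0.9, 0.8]}
print(needs_scaffolding("area of a triangle", history))  # prints ['fractions']
```

With an empty history the function returns an empty list for every concept, which mirrors the point above: a one-time snapshot gives the system nothing to scaffold from.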

Different Stakeholders, Different Value

AI assessment tools deliver distinct value to each group in the school ecosystem:

| Stakeholder | Primary Value |
|---|---|
| Students | Learning companion delivering instant feedback and adaptive support |
| Teachers | Planning and reporting assistant that identifies gaps and recommends interventions |
| School Administrators | Curriculum performance tracker providing institutional-level insights |
| Parents | Visibility into their child's progress, closing the home-school communication loop |

This multi-stakeholder value carries particular weight in Indian schools, where parent engagement has traditionally been confined to report card reviews and annual parent-teacher meetings.

AI assessment tools change that dynamic. Parents gain ongoing visibility into their child's progress — not just once a term, but week to week — making it practical for home and school learning to reinforce each other.

Conclusion

AI-based assessment tools work because they replicate the feedback cycle that great teaching always required—respond, evaluate, adjust, and explain—but they do it at a speed and scale no human grader can match alone. The mechanics are grounded in proven technologies: natural language processing that reads student responses at semantic depth, adaptive algorithms that calibrate difficulty in real time, and reporting systems that turn performance data into actionable instructional decisions.

Understanding how these tools work translates directly into better decision-making. When educators can see the NLP layer interpreting student reasoning, the adaptive calibration preventing under-challenge or overwhelm, and the output loop connecting assessment data to teaching action, they are better equipped to select the right platform and set realistic expectations—and more likely to use the data it generates to genuinely improve outcomes.

Across India, AI adoption in schools is accelerating while infrastructure continues to develop, which makes the choice of platform more consequential, not less. Schools that adopt evidence-backed AI assessment tools now, prioritising immediate feedback delivery, rubric-aligned evaluation, and adaptive calibration, will be well placed to benefit as the evidence base deepens and the market matures.

The real opportunity here isn't simply faster grading. It's closing learning gaps before they compound, personalising instruction at scale, and turning assessment into something continuous rather than episodic.

Frequently Asked Questions

What is an AI-based assessment tool for schools?

An AI-based assessment tool is software that uses natural language processing and adaptive algorithms to create, administer, and evaluate student assessments automatically—delivering instant feedback to students and actionable performance data to teachers.

How does an AI assessment tool give personalised feedback to each student?

The AI analyses each student's specific response against rubrics and cognitive frameworks, identifies where the reasoning broke down, and generates feedback tailored to that individual error. Rather than a generic "wrong answer" message, students get specific guidance on what was missed and why.

Can AI assessment tools handle both formative and summative assessments?

Yes. Formative assessments use real-time difficulty adjustments and continuous feedback to guide learning as it happens. Summative assessments shift toward comprehensive scoring and performance reporting against learning objectives.

Does using an AI assessment tool replace the teacher's role in evaluation?

No. AI handles the mechanical layer—scoring, pattern detection, gap flagging—but the teacher's role in interpreting results, making instructional decisions, and providing human connection remains irreplaceable. The tool surfaces the data; what a teacher does with it is where the real judgment lives.

How does an AI assessment tool help identify learning gaps?

The AI tracks which concepts a student repeatedly struggles with across questions and sessions, then builds a performance profile highlighting specific knowledge gaps. This lets teachers intervene early—well before a gap surfaces during summative testing weeks later.